Video Streaming CDN: How to Use CDNs to Deliver Online Video

A video streaming CDN helps online video load faster, play more smoothly, and remain stable when traffic grows. It keeps latency low and playback quality high, so webinars and training content reach viewers with far less buffering. This article explains how CDN streaming works and how to choose a CDN for streaming that fits both technical and business requirements.

Modern video streaming delivery with CDNs

- A video streaming CDN delivers video from edge servers located closer to viewers.

- A single origin server cannot reliably support large global video audiences.

- Modern video streaming delivery depends on caching and adaptive bitrate streaming.

- The right CDN improves speed, scalability, and playback consistency.

Video streaming CDN basics: What it does and why it matters

A video streaming CDN is a content delivery network designed to distribute video through edge servers located across multiple regions. With a CDN, requests no longer all go back to a single origin server. The CDN stores cached copies of video content and delivers them from the available location closest to the viewer. Video creates much heavier traffic than most web content: a web page loads once, but video must deliver data continuously for the full duration of playback. As a result, if delivery slows down, viewers notice buffering almost immediately. A CDN helps protect against this.

Latency is one of the biggest challenges in video delivery because every extra network hop affects startup time and playback stability. The farther a viewer is from the origin server, the longer data takes to arrive. More distance usually means more congestion and less predictable performance. A CDN reduces that delay by shrinking the gap between the viewer and the content. Instead of crossing continents for every segment request, viewers receive video from nearby edge infrastructure.

This becomes even more important with HD, 4K, and live streaming, where each session requires large amounts of bandwidth over time. Check out FlashEdge’s blog article for a more in-depth look at network latency.

CDN stream benefits: Why video streaming needs a CDN

Streaming directly from one origin server can work for small internal audiences or early testing, but when traffic grows it quickly becomes unreliable.

Every origin server has limits:

- bandwidth capacity

- simultaneous connections

- processing power

- geographic reach

When many viewers request the same content simultaneously, those limits manifest as slow startup, unstable playback, bitrate drops, or buffering.
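To get a feel for how quickly the bandwidth limit arrives, here is a rough back-of-the-envelope sketch in Python. The viewer count and the 5 Mbps per-stream bitrate are illustrative assumptions rather than figures from any particular platform, and the helper name is made up for this example.

```python
def required_egress_gbps(concurrent_viewers: int, bitrate_mbps: float) -> float:
    """Estimate sustained egress if every viewer streams directly from one origin."""
    return concurrent_viewers * bitrate_mbps / 1000  # Mbps -> Gbps


# Assumed numbers: 10,000 concurrent viewers at roughly 5 Mbps each.
print(required_egress_gbps(10_000, 5.0))  # 50.0
```

Under these assumptions a single origin would need to sustain about 50 Gbps, before counting connection handling or processing overhead.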

A CDN solves this by moving repeated delivery away from the origin and into distributed cache locations. The first request for a piece of content may reach the origin, but later requests are served from the CDN's cache near the viewer.

This reduces pressure on origin infrastructure during traffic spikes and makes delivery more resilient under heavy demand. That resilience is crucial during webinars, product launches, live broadcasts, and online events where lots of viewers start watching at the same time.

Without a CDN, video platforms often need to overbuild origin capacity just to survive short periods of peak demand, which means paying for bandwidth that sits idle most of the time.

CDN streaming advantages for global video delivery

The clearest benefit of CDN streaming is faster playback startup because edge servers deliver video segments more quickly than a distant origin.

That improves:

- startup speed

- playback continuity

- bitrate stability

- mobile viewing performance

A CDN also improves video streaming delivery across regions where network quality varies significantly. A viewer in one region shouldn’t need to depend on infrastructure located far away.

Caching also lowers origin load, which reduces bandwidth usage and helps control backend costs over time.

For growing platforms, both performance and cost factor into the decision to use a CDN.

CDNs for streaming: How video streaming CDNs work

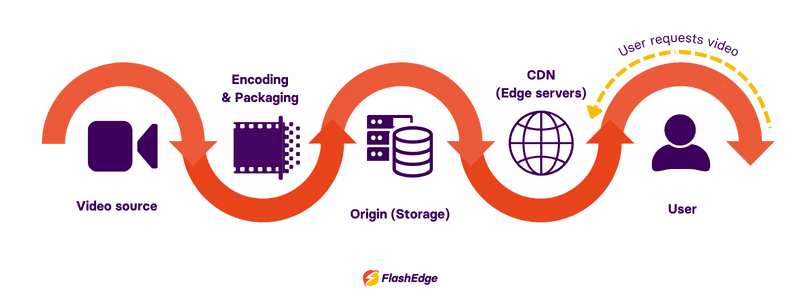

Step 1: Video is uploaded to the origin

The original file is stored on an origin server or cloud storage platform. It is usually prepared in multiple formats and bitrates so different devices and connection speeds can receive suitable versions.

This often includes:

- multiple resolutions

- multiple bitrate levels

- segmented delivery files

Preparing multiple versions of the same video allows adaptive delivery without interrupting playback.

Step 2: The first viewer triggers caching

When the first viewer requests a video, the CDN retrieves segments from the origin and stores them temporarily in cache. Modern streaming usually caches small segments rather than full files, which improves flexibility and adaptive delivery.

Step 3: Edge servers deliver content locally

Once cached, viewers receive those same segments from nearby edge servers.

This is where a CDN for streaming creates the biggest performance gain: lower latency, fewer repeated origin requests, and more stable playback under growing demand.

Video streaming CDN use cases

A video streaming CDN supports any environment where video traffic needs to remain stable under load.

- Live event broadcasting: Webinars, sports streams, conferences, and launches often create sudden simultaneous demand.

- Video on demand: Popular content benefits from repeated edge caching and faster local delivery.

- E-learning platforms: Educational video often serves users across multiple countries where stable playback directly affects engagement.

- Internal enterprise video: Training sessions, recorded meetings, and internal broadcasts increasingly depend on distributed delivery.

CDN for streaming: How to use it effectively

Getting CDN streaming right starts before the first request is made. The video file itself needs to be prepared in a way that works with how CDNs cache and serve content.

Preparing video files for CDN delivery

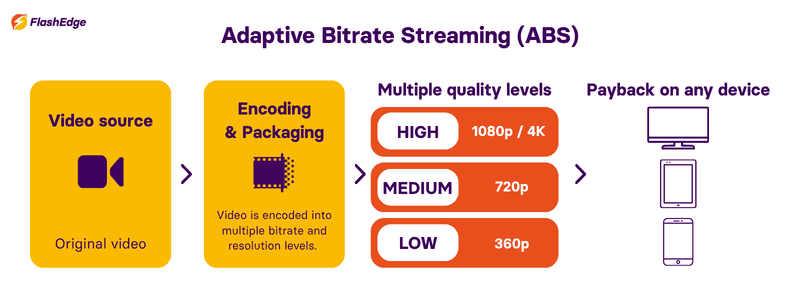

A raw video file is not suitable for streaming as-is. It is first encoded with a delivery codec: H.264 is the most widely supported, while H.265/HEVC offers better compression with slightly narrower device compatibility. Once encoded, the file is packaged for adaptive bitrate streaming using either HLS or MPEG-DASH. Both protocols split the video into short segments, typically 2 to 6 seconds each, alongside a manifest file that tells the player where to find them.

The video is encoded at multiple quality levels, for example 360p, 720p, 1080p, and 4K. The player picks the right variant based on available bandwidth and switches mid-playback if conditions change. The CDN just needs to deliver whichever segment the player requests, quickly.
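The player-side choice described above can be sketched in a few lines of Python. This is a simplified illustration, not how any specific player implements adaptive bitrate logic: the bitrate ladder, the `Variant` type, and the 0.8 safety margin are all assumptions chosen for the example.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Variant:
    name: str
    bitrate_kbps: int  # illustrative average bitrate, not a recommendation


# Hypothetical ladder; real ladders depend on codec and content.
LADDER = (
    Variant("360p", 800),
    Variant("720p", 2800),
    Variant("1080p", 5000),
    Variant("4K", 16000),
)


def pick_variant(measured_kbps: float,
                 ladder: Sequence[Variant] = LADDER,
                 safety: float = 0.8) -> Variant:
    """Return the highest-bitrate variant that fits within a safety margin
    of the measured throughput, falling back to the lowest variant."""
    budget = measured_kbps * safety
    ordered = sorted(ladder, key=lambda v: v.bitrate_kbps)
    chosen = ordered[0]
    for variant in ordered:
        if variant.bitrate_kbps <= budget:
            chosen = variant
    return chosen


print(pick_variant(4000).name)  # 720p
print(pick_variant(500).name)   # 360p
```

A player would re-run a decision like this as its throughput estimate changes, which is why each variant needs to be available at the edge as independent segments.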

How origin and edge servers divide the work

The origin server stores the master content: all encoded variants, manifests, and segments. In a CDN streaming setup, it rarely talks to end users. It talks to edge servers.

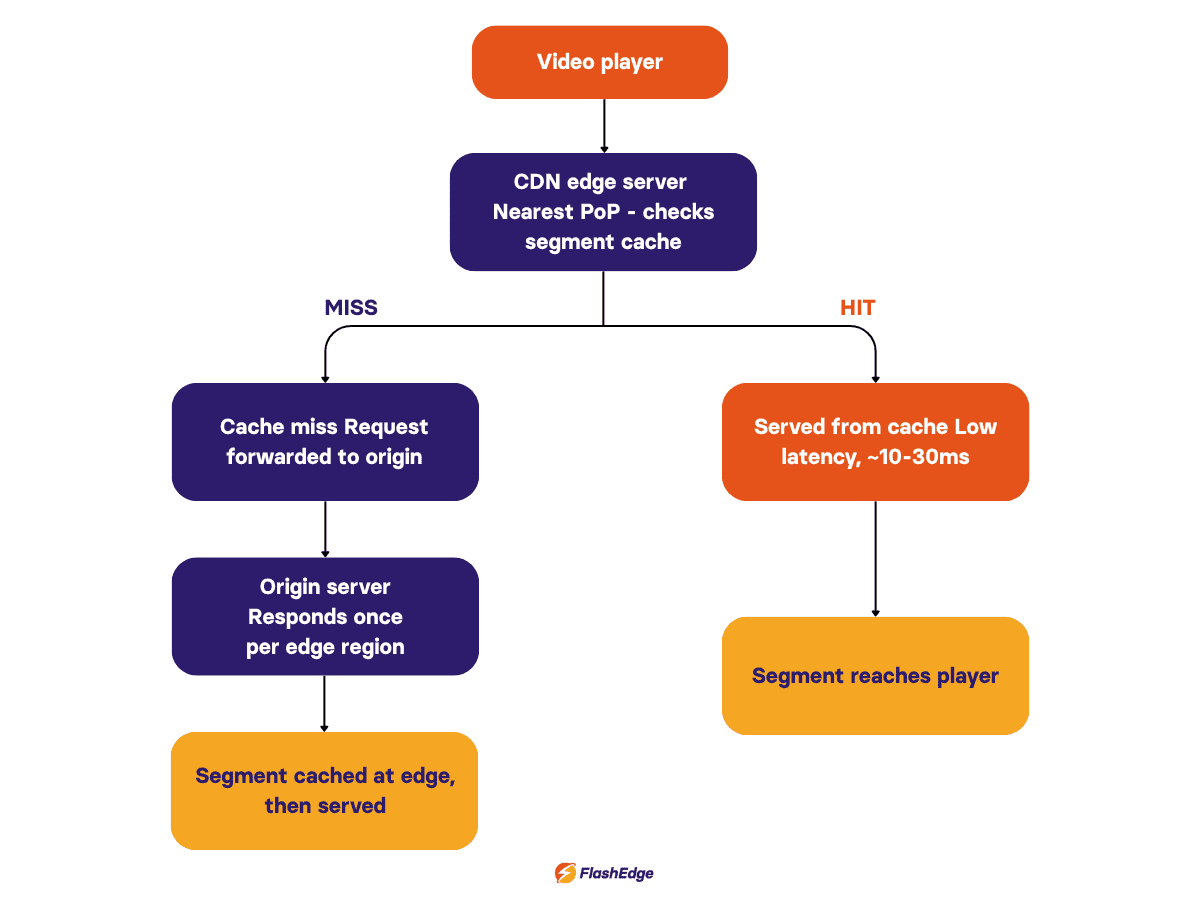

Edge servers (PoPs) are the CDN's distributed nodes, placed close to viewers worldwide. When a viewer presses play, the request goes to the nearest edge. If the segment is cached, it reaches the viewer in milliseconds. If not, the edge fetches it from the origin, caches it, and serves it. The next viewer in that region gets it from cache.

For live streaming, segments are short-lived and continuously replaced, so cache TTLs are kept tight, often matching the segment duration itself.
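The hit, miss, and TTL behavior described above can be modeled with a small in-memory cache. This is a minimal sketch for illustration only: `EdgeCache`, `fake_origin`, and the 6-second TTL are hypothetical names and values, and a real edge server also handles eviction, request coalescing, and cache-control headers.

```python
import time
from typing import Callable, Dict, Tuple


class EdgeCache:
    """Toy model of one edge node: serve from cache, else fetch from origin."""

    def __init__(self, fetch_from_origin: Callable[[str], bytes],
                 ttl_seconds: float,
                 clock: Callable[[], float] = time.monotonic) -> None:
        self._fetch = fetch_from_origin
        self._ttl = ttl_seconds
        self._clock = clock
        self._store: Dict[str, Tuple[float, bytes]] = {}  # path -> (expiry, body)
        self.hits = 0
        self.misses = 0

    def get(self, path: str) -> bytes:
        now = self._clock()
        entry = self._store.get(path)
        if entry is not None and entry[0] > now:
            self.hits += 1  # cache hit: the origin is not contacted
            return entry[1]
        self.misses += 1  # cache miss or expired entry: go back to origin
        body = self._fetch(path)
        self._store[path] = (now + self._ttl, body)
        return body


origin_requests = []


def fake_origin(path: str) -> bytes:
    # Stand-in for a real origin fetch; records each call for inspection.
    origin_requests.append(path)
    return b"segment-bytes"


edge = EdgeCache(fake_origin, ttl_seconds=6.0)  # assumed 6-second live segments
edge.get("/live/seg_001.ts")  # first viewer: miss, fetched from origin
edge.get("/live/seg_001.ts")  # next viewer: hit, served from the edge
print(edge.hits, edge.misses, len(origin_requests))  # 1 1 1
```

For video on demand the TTL would normally be much longer, since segments do not change once published.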

The CDN stream request flow

When a viewer hits play, the player fetches the manifest first. This is a small text file listing quality levels and segment URLs. For live streams, the manifest updates frequently and carries a short TTL.

The player then requests video segments. On a cache hit, the edge responds directly. On a cache miss, the edge pulls the segment from origin and caches it for subsequent viewers. As playback continues, the player buffers several segments ahead. A 15 to 30 second buffer means a brief CDN delay will not interrupt playback.
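To make the manifest-then-segments flow concrete, here is a hedged Python sketch that reads a tiny HLS media playlist and works out which segments a player would request to fill its buffer. The playlist content, segment names, and the 10-second target are invented for the example, and the parser only handles the few tags shown, not the full HLS format.

```python
from typing import List, Optional, Tuple

# Made-up media playlist using standard HLS tags.
SAMPLE_PLAYLIST = """#EXTM3U
#EXT-X-VERSION:3
#EXT-X-TARGETDURATION:6
#EXT-X-MEDIA-SEQUENCE:0
#EXTINF:6.0,
seg0.ts
#EXTINF:6.0,
seg1.ts
#EXTINF:4.0,
seg2.ts
#EXT-X-ENDLIST
"""


def parse_media_playlist(text: str) -> List[Tuple[str, Optional[float]]]:
    """Return (segment URI, duration in seconds) pairs in playlist order."""
    segments: List[Tuple[str, Optional[float]]] = []
    pending: Optional[float] = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("#EXTINF:"):
            # The duration precedes the comma; the segment URI is on the next line.
            pending = float(line[len("#EXTINF:"):].split(",")[0])
        elif line and not line.startswith("#"):
            segments.append((line, pending))
            pending = None
    return segments


def segments_to_fill_buffer(segments: List[Tuple[str, Optional[float]]],
                            target_seconds: float) -> List[str]:
    """Pick segments from the start until the buffer target is covered."""
    chosen: List[str] = []
    buffered = 0.0
    for uri, duration in segments:
        if buffered >= target_seconds:
            break
        chosen.append(uri)
        buffered += duration or 0.0
    return chosen


segments = parse_media_playlist(SAMPLE_PLAYLIST)
print(segments_to_fill_buffer(segments, target_seconds=10))  # ['seg0.ts', 'seg1.ts']
```

Each URI returned here becomes one request to the edge, which is either a cache hit or a miss as described above.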

CDN streaming reduces latency and prevents buffering

Buffering comes from one of two things: the viewer's network cannot sustain the bitrate, or segments are not arriving fast enough. The CDN fixes the second problem. Without CDN streaming, all requests hit a single origin that may be thousands of kilometers away, and round-trip time adds up across every fetch.

An edge server 20 ms away consistently beats an origin 200 ms away. Across the first few segment fetches at startup, that gap is noticeable. For live streams, edge proximity also tightens end-to-end latency, keeping the stream closer to actually live.
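A quick worked example shows how the round-trip gap compounds at startup. The sketch below assumes five sequential fetches before playback (a master manifest, a media playlist, and three segments) and ignores transfer time and connection setup, so treat it as a lower bound under those assumptions rather than a measurement.

```python
def round_trip_wait_ms(rtt_ms: float, sequential_fetches: int) -> float:
    """Time spent purely waiting on round trips, ignoring transfer time."""
    return rtt_ms * sequential_fetches


# Assumed startup sequence: master manifest, media playlist, then 3 segments.
STARTUP_FETCHES = 5

edge_wait = round_trip_wait_ms(20, STARTUP_FETCHES)     # 100 ms
origin_wait = round_trip_wait_ms(200, STARTUP_FETCHES)  # 1000 ms
print(edge_wait, origin_wait)  # 100 1000
```

Even in this simplified model, the distant origin adds close to a second of waiting before the first frame can appear.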

Scaling video streaming delivery under peak load

A single origin has hard limits on concurrent connections and bandwidth. A live event can generate tens of thousands of segment requests per second, and one server cannot absorb that.

With a CDN, each edge PoP handles its own region independently. A segment cached across 50 locations means the origin only handles the first fetch per location, not one request per viewer. Once cache coverage is established, the origin only has to serve new or expired segments. That is what makes video streaming delivery at scale practical: not just faster, but achievable without oversized origin infrastructure.
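The arithmetic behind this can be sketched as follows. The example assumes each edge location fetches a given segment from the origin exactly once, which in practice depends on request coalescing and on the segment not being evicted early. The viewer count is an invented figure and the function names are hypothetical.

```python
def origin_fetches_per_segment(viewers: int, edge_locations: int, use_cdn: bool) -> int:
    """Origin requests needed to deliver one segment to every viewer."""
    if not use_cdn:
        return viewers  # every viewer hits the origin directly
    # Assumption: one origin fetch per edge location, then served from cache.
    return min(viewers, edge_locations)


def offload_ratio(viewers: int, edge_locations: int) -> float:
    """Share of segment requests that never reach the origin."""
    if viewers == 0:
        return 0.0
    return 1 - origin_fetches_per_segment(viewers, edge_locations, True) / viewers


# Assumed scenario: 20,000 concurrent viewers spread across 50 edge locations.
print(origin_fetches_per_segment(20_000, 50, use_cdn=False))  # 20000
print(origin_fetches_per_segment(20_000, 50, use_cdn=True))   # 50
print(round(offload_ratio(20_000, 50), 4))                    # 0.9975
```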

Protecting CDN streams with token authentication

Caching segments at the edge does not protect them by default. Anyone with a segment URL can fetch it freely. Most CDN platforms handle this with token-based authentication: a signed, time-limited token embedded in the URL is validated at the edge before content is served. Tokens are typically signed with HMAC and can be restricted by expiry time, IP address, or path.

This does not replace DRM for premium content, but it blocks unauthorized access at the delivery layer without any changes to the player or video files.
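As an illustration of the idea, here is a minimal Python sketch of signing and validating a time-limited URL with HMAC-SHA256 from the standard library. It is not the token format of FlashEdge or any other CDN: the `expires` and `token` query parameter names, the `path:expiry` message layout, and the placeholder secret are all assumptions made for this example.

```python
import hashlib
import hmac
import time
from typing import Optional
from urllib.parse import parse_qs, urlencode, urlparse


def _signature(path: str, expires_at: int, secret: bytes) -> str:
    # Hypothetical message layout: "<path>:<expiry timestamp>".
    message = f"{path}:{expires_at}".encode()
    return hmac.new(secret, message, hashlib.sha256).hexdigest()


def sign_url(path: str, secret: bytes, expires_at: int) -> str:
    """Append an expiry time and an HMAC token to a segment path."""
    query = urlencode({"expires": expires_at,
                       "token": _signature(path, expires_at, secret)})
    return f"{path}?{query}"


def verify_url(url: str, secret: bytes, now: Optional[float] = None) -> bool:
    """Edge-side check: reject expired URLs and tokens that do not match."""
    parsed = urlparse(url)
    params = parse_qs(parsed.query)
    try:
        expires_at = int(params["expires"][0])
        token = params["token"][0]
    except (KeyError, IndexError, ValueError):
        return False
    current = time.time() if now is None else now
    if current > expires_at:
        return False
    expected = _signature(parsed.path, expires_at, secret)
    # Constant-time comparison avoids leaking how much of the token matched.
    return hmac.compare_digest(expected.encode(), token.encode())


SECRET = b"example-shared-secret"  # placeholder; never hard-code real keys
url = sign_url("/vod/movie/seg_0001.ts", SECRET, expires_at=int(time.time()) + 300)
print(verify_url(url, SECRET))  # True
```

Because the path is part of the signed message, a token issued for one segment cannot be reused for a different path, and the expiry limits how long a leaked URL stays valid.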

CDN stream selection: How to choose a provider

When evaluating a CDN stream provider, focus on measurable delivery quality.

- Check edge coverage

- Compare latency

- Confirm adaptive streaming support

- Review pricing carefully

The best CDN choice depends on where your audience is located and how predictable your traffic patterns are.

Try a CDN for video streaming with FlashEdge

FlashEdge supports workloads where low latency, efficient caching, and reliable delivery directly affect viewer experience.

FlashEdge places video segments closer to users, and ensures those segments are already cached before demand peaks.

This means:

- viewers start playback faster because the first segments are served from a nearby edge node

- fewer interruptions occur because segments don’t need to travel long distances during playback

- origin servers receive fewer repeat requests, reducing overload risk during traffic spikes

FlashEdge also uses real-time routing: if one edge location is under heavy load, traffic is automatically redirected to a healthier node, which helps keep delivery reliable even during live events with thousands of viewers joining at the same time.

Another key benefit is segment-level caching. Instead of caching full video files, FlashEdge stores small chunks used in adaptive streaming. This improves bitrate switching, so viewers on unstable connections are less likely to experience stalls.

If you’d like to learn more about how a CDN could affect your business, book a call with one of our representatives for practical advice that is tailored to your architecture.

Frequently Asked Questions

What is a video streaming CDN?

A video streaming CDN stores and delivers video from edge servers closer to viewers.

Why is a CDN important for video streaming?

It reduces latency, buffering, and origin server load.

Can a CDN improve live streaming?

Yes. It distributes simultaneous requests across edge servers.

Which protocol is most common for CDN streaming?

HLS remains the most widely supported format.

How does a CDN reduce buffering?

By serving video from a nearby edge server instead of a distant origin, reducing latency and network congestion.

Does a CDN improve startup time?

Yes. Cached segments at the edge allow playback to begin faster.

What is a cache miss?

When content isn’t yet stored at the edge, it is fetched from origin and cached for future viewers.

Does a CDN lower costs?

Yes. Fewer origin requests reduce bandwidth usage and infrastructure strain.

Ready to start your journey to low latency and reliable content delivery?

If you’re looking for an affordable CDN service that is also powerful, simple, and globally distributed, you are in the right place. Accelerate and secure your content delivery with FlashEdge.
